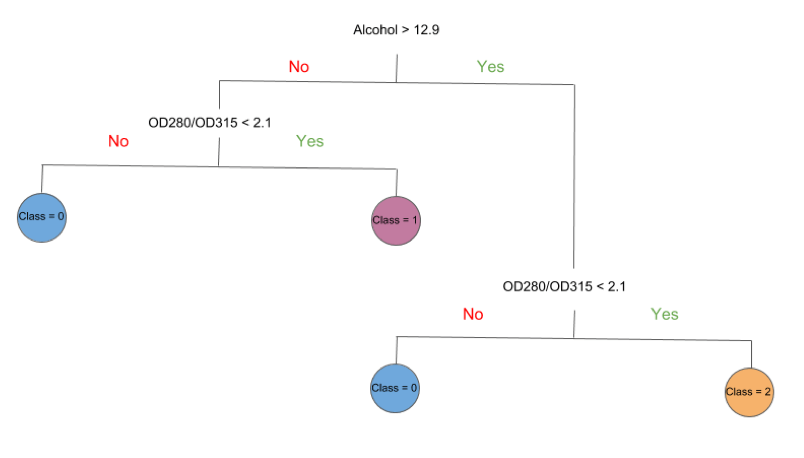

If explainability is a priority, then decision trees are the way to go. If you have a lot of data and need a highly-accurate model, but don’t care as much about explainability, then multi-layered neural networks may be your best bet. Selecting the right machine learning model can be tricky, but it’s all based on your goals. Elastic NetĮlastic Net is an alternative to Least Squares Regression, where elastic-net attempts to discard less important variables and also reduce overfitting.Īs with other “alternative” solutions, elastic-net is more complex, and thus less explainable, than the standard solution. This can be more accurate than Random Forest, but note that Gradient Boosting is more sensitive to overfitting, takes longer to train (because trees are built sequentially), and is harder to tune. The difference is that Gradient Boosting builds decision trees one at a time, rather than independently, to correct errors made by previous trees. Gradient Boosting is very similar to Random Forest, in that it’s an ensemble method that creates many decision trees. Logistic regression is similar to the linear regression we all learned in grade school - y=mx+b - except it’s used when the independent variable is a class, like “yes” or “no,” instead of a number. While in kNN, “k” refers to the number of nearest neighbors, the “k” in k-Means refers to the number of clusters. KNN is used for labelled data, which makes it a “supervised learning” problem, while k-Means is used for unlabelled data, making it an “unsupervised learning” problem. K-Means, while similar sounding to kNN, is a completely different algorithm. If K is 5, then the 5 “nearest neighbors” are three blue squares and two red triangles, so it’ll be classified as a blue square. If K is 3, then the 3 “nearest neighbors” are two red triangles and one blue square, so it’ll be classified as a red triangle. 
In the above example, the goal is to classify the green circle as one of two classes, which are symbolized as either the blue squares or the red triangles. k-Nearest NeighborsĪs the name implies, the k-Nearest Neighbors (kNN) algorithm works by assuming that a given data point is similar to the nearest K points, where K is roughly the square root of the number of data points. They’re far less commonly used nowadays, and are naturally less accurate, but more explainable, than multi-layered neural networks, making them suitable for very small datasets. Unlike “deep learning,” which has many hidden layers, a perceptron has just one hidden layer. PerceptronĪ perceptron is the simplest form of a neural network. They’re generally a lot more accurate than simpler models, especially when using big data. However, neural networks are powerful because of their complexity, and have an extremely wide-range of use-cases. When people talk about “black box AI,” they’re referring to deep learning, because we don’t intuitively understand how complex algorithms work, in contrast to something like a decision tree. The most common phrase you’ll hear is “deep learning,” which refers to neural networks with many layers.Įssentially, these are compound mathematical functions that make predictions by finding parameters that minimize error. Multi-layered neural networks are at the heart of state-of-the-art AI. In comparison to Random Forest, Naive Bayes is less likely to overfit. Naive Bayes is often used for tasks like spam filtering, text classification, sentiment analysis, and recommender engines. Given probabilities of certain events, you can estimate the probability of another event. Naive Bayes is a classification method that uses probability theory to make decisions.
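To make the k-Nearest Neighbors vote described earlier more concrete, here is a minimal Python sketch. The coordinates are made up for illustration (they are not taken from the figure) and were chosen so that K = 3 and K = 5 disagree, as in the green-circle example. The function name `knn_classify` is hypothetical, and Euclidean distance is an assumption; the article does not specify a distance measure.

```python
import math
from collections import Counter


def knn_classify(query, points, k):
    """Return the majority label among the k points closest to `query`."""
    # Sort the labelled points by Euclidean distance to the query point.
    by_distance = sorted(points, key=lambda p: math.dist(query, p[0]))
    # Count the labels of the k nearest neighbors and pick the most common one.
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]


# Hypothetical coordinates, chosen so that the value of K changes the outcome.
points = [
    ((1.0, 0.0), "red triangle"),
    ((0.0, 1.2), "red triangle"),
    ((1.5, 0.0), "blue square"),
    ((0.0, 2.0), "blue square"),
    ((2.2, 0.0), "blue square"),
    ((5.0, 5.0), "red triangle"),
]
green_circle = (0.0, 0.0)

print(knn_classify(green_circle, points, k=3))  # red triangle
print(knn_classify(green_circle, points, k=5))  # blue square
```

Because the prediction is a simple majority vote, choosing an odd K avoids ties when there are only two classes.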
